How Much AI Due Diligence Is Enough? A Risk-Based Approach for CPAs

May 01, 2026

Opportunities to use artificial intelligence (AI) are expanding quickly across the tools CPAs already use. As vendors add AI features, firms face a familiar challenge: adopting useful innovation while managing risk.

Due diligence is not optional. The real question is:

How much diligence is enough for this situation?

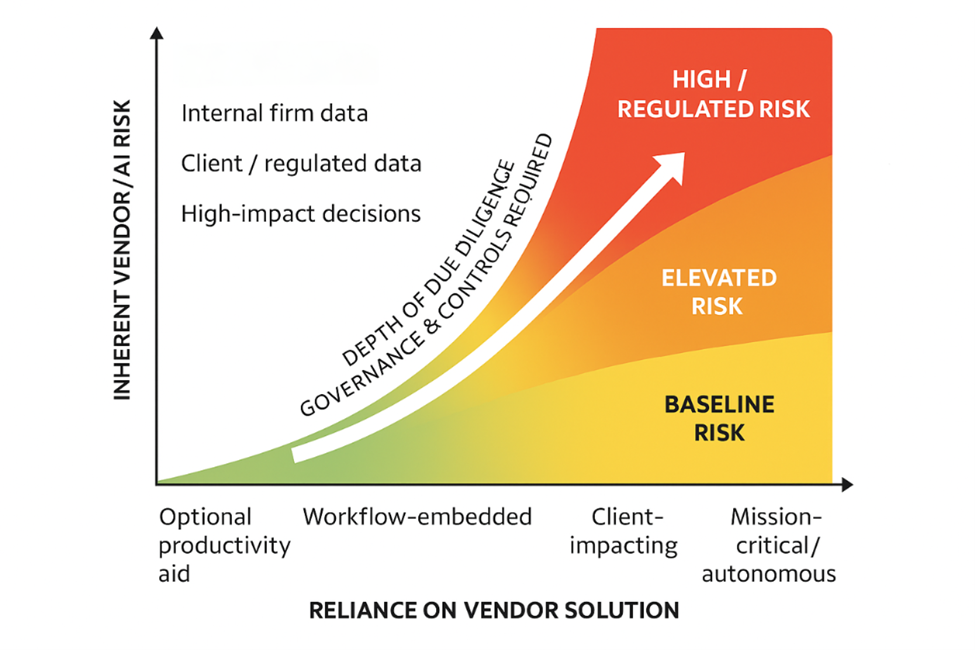

AI solutions are being implemented in many forms. Even if a CPA has no intention of adopting AI, software providers are building AI functionality into the tools CPAs use daily. The easy access accounting staff now have to AI tools also creates both opportunities and risks for organizations. As with any vendor relationship, the move toward using AI must be accompanied by thoughtful due diligence designed to protect a CPA’s clients, organizations, stakeholders and data.

The AICPA’s AI Solution Due Diligence Guide for Accounting Firms was designed as a practical framework to help firms evaluate AI-enabled solutions. Importantly, it makes clear that not every question applies to every tool; due diligence should scale with risk, not with hype or firm size. Despite its title, it is also a practical guide for CPAs in private industry and academia, because good AI governance is not limited to firms in public practice. A helpful way to apply the guide is to group AI use cases into three exposure lanes: baseline, elevated and high.

Lane 1: Baseline Risk

If you can explain where the data goes, confirm it is not used for model training, and set clear internal usage guidance, additional diligence is typically not necessary at this level.

These are internal-use cases that do not involve client data or regulated workflows. Examples might include:

- Marketing drafts

- Internal brainstorming

- Research summaries without personally identifiable information

- Productivity assistance

At this level, the “Quick Start” section of the AICPA guide, which lists the top five things to verify first (see page 2 of the guide), is usually sufficient. At a minimum, anyone evaluating an AI solution should understand:

- Where your data goes

- Whether it is used to train the underlying models

- Whether third-party large language model (LLM) providers are involved

- What happens to data after the trial period ends or the contract expires

Although comprehensive due diligence is not necessary for low-risk uses, you still need to understand how the platform works and review its basic documentation.

For low exposure, completing the Quick Start review and setting clear internal usage guidance is typically enough.

Lane 2: Elevated Risk

At this stage, diligence is sufficient when you can clearly describe data handling, validate outputs through testing, and demonstrate that appropriate controls and human oversight are in place.

This is where most firms will operate when deploying an AI solution. Examples include:

- AI embedded in tax or audit software

- Tools accessing client documents

- AI drafting client deliverables subject to human review

- New AI features from existing vendors

In Lane 2, client data is involved, increasing the need for security oversight. Human oversight remains, but AI is influencing workflow and possibly client deliverables.

At this level:

- Complete the full Quick Start review.

- Request and review a SOC 2 report (preferably Type 2) and confirm its scope.

- Review data flow documentation.

- Confirm data retention and model training boundaries.

- Conduct validation testing using anonymized or firm-controlled data.

You should also selectively use the guide’s “Expansive Questions to Ask Vendors” (on page 3), focusing on:

- Data privacy and ownership

- Auditability and explainability

- Security and access controls

- Accuracy and reliability

You don’t need every question in the document. But you should be able to clearly explain how the tool handles data and how outputs are validated before use.

Lane 3: High Risk/Regulated Risk

For high‑exposure use cases, diligence should be documented and defensible at the partner, board or regulatory level, with clear ownership for ongoing monitoring and review.

In this lane, AI materially affects compliance, assurance or regulated decision-making.

Examples include:

- AI influencing audit procedures and conclusions

- Automated risk scoring

- AI use in regulated industries

- Autonomous AI agents executing functional workflows

At this level, firms should work through all three sections of the guide:

- Quick Start

- Expansive Questions to Ask Vendors

- Due Diligence Evidence Checklist (on page 7)

The checklist includes items such as SOC 2 reports, service level agreements, data processing agreements, sub-processor lists, incident response plans and data-flow diagrams.

Documentation, governance and ongoing monitoring matter here. AI add-ons from existing vendors should be evaluated as separate products — a long-standing relationship does not eliminate new AI risk.

A Governance Lens for CPAs

Remember that the level of diligence scales with risk, not firm size. A small firm using AI in audit engagements may require more review than a large firm using AI strictly for marketing content.

The AICPA guide is intentionally layered so firms can scale their evaluation appropriately. As CPAs, we already think this way. We scale procedures based on risk. AI vendor due diligence should follow the same principle, with someone accountable for determining the appropriate level of review based on the use case.
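For firms that want to make the lane decision repeatable at intake, the lane criteria described above can be written down as a simple decision rule. The Python below is a minimal, hypothetical sketch: the `UseCase` fields, the `classify` function and the rule that the highest triggered lane applies are illustrative assumptions, not part of the AICPA guide. The person accountable for the decision still applies professional judgment to the result.

```python
from dataclasses import dataclass
from enum import Enum


class Lane(Enum):
    BASELINE = 1
    ELEVATED = 2
    HIGH = 3


# Sections of the AICPA guide this article suggests working through in each lane.
GUIDE_SECTIONS = {
    Lane.BASELINE: ["Quick Start"],
    Lane.ELEVATED: ["Quick Start", "Expansive Questions to Ask Vendors (selected topics)"],
    Lane.HIGH: [
        "Quick Start",
        "Expansive Questions to Ask Vendors",
        "Due Diligence Evidence Checklist",
    ],
}


@dataclass
class UseCase:
    """Hypothetical intake record for one proposed AI use; field names are illustrative."""
    name: str
    uses_client_data: bool = False                 # e.g., tools accessing client documents
    drafts_client_deliverables: bool = False       # output reaches clients after human review
    affects_assurance_or_compliance: bool = False  # e.g., audit conclusions, risk scoring
    regulated_industry: bool = False               # AI used within a regulated industry
    autonomous_agent: bool = False                 # agent executes workflows on its own


def classify(use_case: UseCase) -> Lane:
    """Return the highest lane that any characteristic of the use case triggers (an assumption)."""
    if (
        use_case.affects_assurance_or_compliance
        or use_case.regulated_industry
        or use_case.autonomous_agent
    ):
        return Lane.HIGH
    if use_case.uses_client_data or use_case.drafts_client_deliverables:
        return Lane.ELEVATED
    return Lane.BASELINE


if __name__ == "__main__":
    # Example use cases taken from the lane descriptions above.
    for case in [
        UseCase("Internal brainstorming"),
        UseCase("AI embedded in tax software", uses_client_data=True),
        UseCase(
            "Automated risk scoring",
            uses_client_data=True,
            affects_assurance_or_compliance=True,
        ),
    ]:
        lane = classify(case)
        print(f"{case.name}: {lane.name} -> {', '.join(GUIDE_SECTIONS[lane])}")
```

Checking the highest-risk characteristics first means a use case that touches client data and also influences audit conclusions lands in Lane 3 rather than Lane 2, which mirrors the article's point that diligence should follow the most significant exposure.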

AI will continue integrating into the tools we use every day. The goal is not to slow innovation. The goal is clarity:

- Where does the data go?

- How is AI being used?

- What safeguards exist?

- How are outputs validated?

CPAs are entrusted with diligence, independence and objectivity. AI does not reduce that responsibility — it simply shifts where we apply it.

By applying a risk-based mindset and using the AICPA guide proportionally, Virginia CPAs can adopt AI confidently while protecting the clients and organizations we serve.

This guide was developed by the VSCPA Technology & Innovation Advisory Council. The Council provides strategic direction to the VSCPA Board of Directors on technology initiatives within the accounting profession. Questions about the Council? Contact VSCPA Senior Vice President, Strategy & Innovation Tina Bates, CAE.

Learn more at the CPA AI Summit!

This one-day in-person AND virtual conference goes beyond the basics to deliver practical, real-world AI insights you can apply immediately. Details:

When: Oct. 13, 2026, 8:30 a.m. – 5 p.m.

Where: Capital One, McLean, Va.

Credits: 7.5